Decoding Your Year in Sound: A Technical Guide to Spotify Wrapped 2025 Highlights

Overview

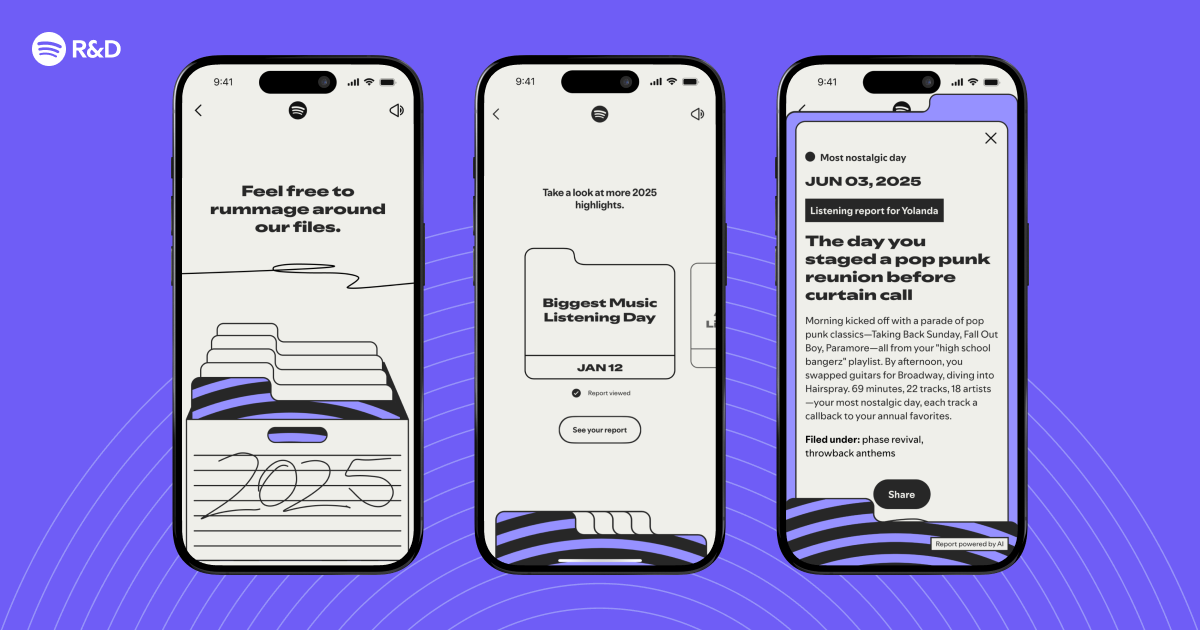

Spotify Wrapped has become an annual ritual for millions, offering a personalized snapshot of a year’s listening habits. For 2025, the experience went deeper—moving beyond simple stats to surface interesting listening moments and weave them into a narrative. This guide unpacks the technology behind those highlights, from data pipelines to storytelling algorithms. Whether you're a data engineer, ML enthusiast, or curious user, you’ll understand how Spotify identifies and curates those “aha” moments from your audio history.

Prerequisites

Before diving into the tech stack, you should be familiar with:

- Basic data engineering concepts (ETL pipelines, batch processing)

- Machine learning fundamentals (especially clustering and recommender systems)

- User behavior analytics (sessions, events, frequency)

- Spark or a similar big data framework (optional)

- Spotify API basics (to understand data sources)

Step-by-Step Guide to Building Listening Moment Highlights

1. Collect and Preprocess Listening Data

Spotify ingests billions of streaming events daily. Each “play” event includes: user ID, track ID, timestamp, device context, and engagement signals (skips, replays, completions). For Wrapped, data from the entire calendar year is aggregated. Key steps:

- Extract raw events from distributed logs (Kafka, GCS).

- Filter to individual (single‑listener) sessions only, excluding shared sessions.

- Normalize timestamps to user’s local timezone for accurate “moment” detection (e.g., late‑night binges).

- Remove bot traffic and outlier accounts (e.g., machines playing 24/7).

2. Define “Interesting Listening Moments”

Not every play is story‑worthy. Engineers define heuristics based on behavioral signals:

- Unusual density: Many plays of one artist in a short window.

- Context change: Shifts from calm to energetic genres within minutes.

- Rediscovery: Re‑listening to an old favorite after months.

- First‑ever play: Debut of a new genre or artist.

Each signal is scored, and the scores are combined in a weighted formula. Thresholds are tuned through user testing so that moments feel surprising but relatable.
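
As a rough sketch of what a weighted scoring formula might look like, the snippet below combines the four signals into a single score. The signal names, the weights, and the threshold are hypothetical values chosen for illustration; the source does not state the real ones.

```python
# Hypothetical weights and threshold; real values would come from user testing.
SIGNAL_WEIGHTS = {
    "density": 0.40,
    "context_change": 0.20,
    "rediscovery": 0.25,
    "first_play": 0.15,
}
MOMENT_THRESHOLD = 0.5


def moment_score(signals: dict[str, float]) -> float:
    """Weighted sum of signal strengths, each clamped to the range [0, 1]."""
    return sum(
        weight * min(max(signals.get(name, 0.0), 0.0), 1.0)
        for name, weight in SIGNAL_WEIGHTS.items()
    )


def is_interesting(signals: dict[str, float]) -> bool:
    """A window counts as a moment when its score reaches the threshold."""
    return moment_score(signals) >= MOMENT_THRESHOLD
```

Because the weights sum to 1, the score stays in [0, 1], which makes the threshold easy to reason about when tuning.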

3. Feature Engineering for Moment Detection

Raw events are transformed into session‑level features:

- Sliding windows (1‑hour, 6‑hour, 1‑day) to capture intensity.

- Audio features from Spotify’s API (e.g., danceability, energy, valence) to detect mood swings.

- Time‑series aggregates: rolling counts of new vs. repeat tracks.

- Sequence features: transition probabilities between genres/artists.

These features feed a moment classifier—a gradient‑boosted model trained on curated labels (human‑rated “interesting” vs. “boring” windows).
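
Two of the features listed above, windowed play counts and genre transition probabilities, can be sketched with the standard library alone. The function names and input shapes here are assumptions for illustration; a production pipeline would compute the same quantities in a distributed framework.

```python
from bisect import bisect_right
from collections import Counter, defaultdict
from datetime import datetime, timedelta


def plays_in_window(sorted_times, end, width):
    """Count plays with a timestamp in (end - width, end].

    sorted_times must be in ascending order.
    """
    lo = bisect_right(sorted_times, end - width)
    hi = bisect_right(sorted_times, end)
    return hi - lo


def transition_probabilities(genres):
    """Estimate P(next genre | current genre) from one ordered play sequence."""
    counts = defaultdict(Counter)
    for current, nxt in zip(genres, genres[1:]):
        counts[current][nxt] += 1
    return {
        current: {g: n / sum(nexts.values()) for g, n in nexts.items()}
        for current, nexts in counts.items()
    }
```

Calling `plays_in_window` with widths of one hour, six hours, and one day yields the three intensity features, and a low‑probability transition (for example, from a calm genre to an energetic one) is a candidate context change.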

4. Cluster Moments into Stories

Individual moments are then grouped into cohesive narratives using clustering (DBSCAN with temporal and semantic constraints). For example:

- “Summer road trip” cluster: high energy, same top artists, repeated in July.

- “Late‑night study phase” cluster: classical playlist, low valence, fixed time of day.

Each cluster gets a descriptive label based on dominant tracks, genres, and timestamps. A natural‑language generation (NLG) module turns the cluster into a sentence: “You listened to Chill Lofi during 17 late nights this year.”
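
To make the clustering step concrete, here is a small pure‑Python DBSCAN in which two moments are neighbors only if they share a genre (the semantic constraint) and fall within `eps_days` of each other (the temporal constraint). The `Moment` type, the parameter defaults, and the sentence template in `describe` are assumptions for this sketch; a real system would use richer features and a library implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Moment:
    day_of_year: int
    genre: str


def neighbors(moments, i, eps_days):
    """Indices of same-genre moments within eps_days of moment i (includes i)."""
    m = moments[i]
    return [
        j for j, other in enumerate(moments)
        if other.genre == m.genre
        and abs(other.day_of_year - m.day_of_year) <= eps_days
    ]


def dbscan(moments, eps_days=3, min_pts=3):
    """Return one label per moment: a cluster id (0, 1, ...) or -1 for noise."""
    UNVISITED, NOISE = None, -1
    labels = [UNVISITED] * len(moments)
    cluster_id = -1
    for i in range(len(moments)):
        if labels[i] is not UNVISITED:
            continue
        seeds = neighbors(moments, i, eps_days)
        if len(seeds) < min_pts:
            labels[i] = NOISE  # may later be claimed as a border point
            continue
        cluster_id += 1
        labels[i] = cluster_id
        queue = [j for j in seeds if j != i]
        while queue:
            j = queue.pop()
            if labels[j] == NOISE:
                labels[j] = cluster_id  # border point: joins but is not expanded
            if labels[j] is not UNVISITED:
                continue
            labels[j] = cluster_id
            j_neighbors = neighbors(moments, j, eps_days)
            if len(j_neighbors) >= min_pts:
                queue.extend(j_neighbors)  # j is a core point: expand through it
    return labels


def describe(moments, labels, cluster):
    """Fill a fixed sentence template from one cluster (a stand-in for NLG)."""
    members = [m for m, label in zip(moments, labels) if label == cluster]
    days = len({m.day_of_year for m in members})
    return f"You listened to {members[0].genre} on {days} days this year."
```

DBSCAN suits this task because it does not need the number of stories up front and it leaves isolated moments unclustered as noise rather than forcing them into a narrative.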

5. Personalize the Narrative Flow

The final Wrapped story is not random—it’s ordered for emotional impact. A reinforcement learning (RL) agent sequences the clusters:

- Start with a strong, surprising moment (hook).

- Follow with a reflective period (mid‑year nostalgia).

- End with current listening trends.

Rewards are based on user engagement with previews (A/B tested). The RL policy is updated using historical Wrapped interactions.
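
A full RL agent is beyond a short example, but the core idea of learning an ordering from engagement rewards can be illustrated with an epsilon‑greedy bandit, a much simpler stand‑in. Everything here is hypothetical: the `OrderingBandit` class, the fixed set of candidate orderings, and the meaning of the reward (for instance, the fraction of cards a user viewed).

```python
import random


class OrderingBandit:
    """Epsilon-greedy bandit over a fixed set of candidate story orderings."""

    def __init__(self, orderings, epsilon=0.1, rng=None):
        self.orderings = list(orderings)
        self.epsilon = epsilon
        self.rng = rng or random.Random()
        self.pulls = [0] * len(self.orderings)
        self.mean_reward = [0.0] * len(self.orderings)

    def choose(self):
        """Return the index of the ordering to show next."""
        if self.rng.random() < self.epsilon:
            return self.rng.randrange(len(self.orderings))  # explore
        # Exploit: pick the ordering with the best average reward so far.
        return max(range(len(self.orderings)), key=self.mean_reward.__getitem__)

    def update(self, index, reward):
        """Fold one observed engagement reward into the running mean."""
        self.pulls[index] += 1
        self.mean_reward[index] += (reward - self.mean_reward[index]) / self.pulls[index]
```

In use, each A/B‑tested preview would call `choose()` to pick an ordering and `update()` with the observed engagement, so better orderings are shown more often over time.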

6. Generate and Deliver the Experience

Once the sequence is finalized, the pipeline:

- Renders animated cards with album art and audio clips.

- Caches the results in a CDN.

- Delivers them through the mobile app’s Wrapped tab in early December.

All processing runs as Apache Beam jobs on Google Cloud Dataflow, with final scoring in TensorFlow Serving containers.

Common Mistakes

Over‑Engineering Moment Definitions

Using too many heuristics can yield false positives (e.g., a boring commute becomes a “dramatic moment”). Fix: Use a simple classifier as a primary filter, with heuristics as secondary signals.

Ignoring User Control

Some users want to see all moments, not just curated ones. The 2025 version added a “show all moments” toggle. Lesson: Always provide an unfiltered view for power users.

Data Leakage in Clustering

Using future data to label past moments (e.g., including December’s plays when scoring July) leaks information that was not available when the moment happened. Fix: Strictly partition by month before feature extraction.
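
One way to enforce that partitioning is to group events by calendar month first and run the feature extractor on each group in isolation. This is a minimal sketch under assumed names: `partition_by_month`, `monthly_features`, and the `(timestamp, track_id)` event shape are all hypothetical.

```python
from collections import defaultdict
from datetime import datetime


def partition_by_month(events):
    """Group (timestamp, track_id) pairs by (year, month)."""
    partitions = defaultdict(list)
    for played_at, track_id in events:
        partitions[(played_at.year, played_at.month)].append((played_at, track_id))
    return dict(partitions)


def monthly_features(events, extract):
    """Run `extract` on each month separately so later plays cannot leak in."""
    return {
        month: extract(rows)
        for month, rows in partition_by_month(events).items()
    }
```

Because `extract` only ever receives one month's rows, a July feature cannot depend on a December play, whatever the extractor does internally.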

Narrative Fatigue

Too many clustered stories can overwhelm. Fix: Cap the number of highlights (typically 5–7) and use a clustering distance threshold to merge small groups.
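
The merge‑then‑cap fix might look like the following sketch. The `Story` fields, the one‑dimensional temporal distance, and the default values are assumptions for illustration; a real system would likely measure distance in a richer feature space.

```python
from dataclasses import dataclass


@dataclass
class Story:
    center_day: float  # mean day-of-year of the cluster's moments
    size: int          # number of moments in the cluster
    score: float       # how interesting the story is (higher is better)


def merge_close(stories, merge_distance):
    """Merge temporally adjacent stories whose centers are within merge_distance days."""
    merged = []
    for s in sorted(stories, key=lambda s: s.center_day):
        if merged and s.center_day - merged[-1].center_day <= merge_distance:
            prev = merged[-1]
            total = prev.size + s.size
            # Size-weighted center; the merged story keeps the better score.
            center = (prev.center_day * prev.size + s.center_day * s.size) / total
            merged[-1] = Story(center, total, max(prev.score, s.score))
        else:
            merged.append(Story(s.center_day, s.size, s.score))
    return merged


def select_highlights(stories, merge_distance=5.0, max_highlights=7):
    """Merge nearby stories, then keep only the top-scoring ones."""
    merged = merge_close(stories, merge_distance)
    return sorted(merged, key=lambda s: s.score, reverse=True)[:max_highlights]
```

Merging before capping means two small, nearby clusters compete as one stronger story instead of crowding out each other.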

Summary

Spotify Wrapped 2025’s highlights rely on a multi‑stage pipeline: collecting global listening data, detecting interesting moments via machine learning, clustering them into thematic stories, and finally ordering them for maximum user delight. The tech stack is built on cloud data processing (Beam, Dataflow) and customizable ML models that balance surprise with personalization. By understanding the architecture, developers can apply similar approaches to create narrative‑driven analytics in other domains.