10 Critical Data Quality Issues in ML, Generative AI, and Agentic AI

When AI projects fail, the culprit is rarely the algorithm; it is usually the data. In traditional machine learning, data quality problems are often caught before they cause major damage. With generative AI and agentic AI, the stakes are higher: a chatbot can confidently answer with wrong information, or an autonomous agent can commit budget based on incomplete records, all without any warning. This article covers ten things you need to know about how data quality affects modern AI systems.

1. The Hidden Cost of Bad Data

Nobody builds a bad model on purpose. Yet poor data quality is one of the most common reasons AI projects stall, drift, or fail in production. A pricing model can cause a $2.3 million margin shortfall because its training data looked clean while hiding a gap (item 7 describes such a case). In traditional ML, such failures are at least visible: a dashboard shows the wrong number, an analyst catches it, and a retrain fixes the issue. The cost is still real, and it is only the beginning.

2. Why Traditional ML Failures Are Visible

Classic machine learning operates in a predictable cycle: model → prediction → review. When data quality slips, the output becomes obviously wrong—a regression line shifts, a classification score drops. This visibility allows teams to contain the damage quickly. For example, a credit risk model that suddenly starts approving bad loans triggers an alert. The root cause (e.g., missing fields or outdated records) is traceable. This familiar relationship between ML and data quality gives engineers a safety net.
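The alerting pattern described above can be sketched as a simple threshold check. This is a minimal illustration, not a production monitor: the function name `metric_alert`, the `max_drop` threshold, and the AUC values are all hypothetical.

```python
from statistics import mean

def metric_alert(baseline_scores, recent_scores, max_drop=0.05):
    """Return True when the recent average is more than max_drop below the baseline average."""
    baseline = mean(baseline_scores)
    recent = mean(recent_scores)
    return (baseline - recent) > max_drop

# Hypothetical weekly AUC values for a credit risk model.
baseline_auc = [0.91, 0.90, 0.92]   # after launch
recent_auc = [0.84, 0.83, 0.85]     # after a data quality slip

if metric_alert(baseline_auc, recent_auc):
    print("Alert: model score dropped; check input data for missing or outdated fields")
```

A check like this only works because the model's output is scored and reviewed at all, which is the safety net that the later sections show disappearing.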

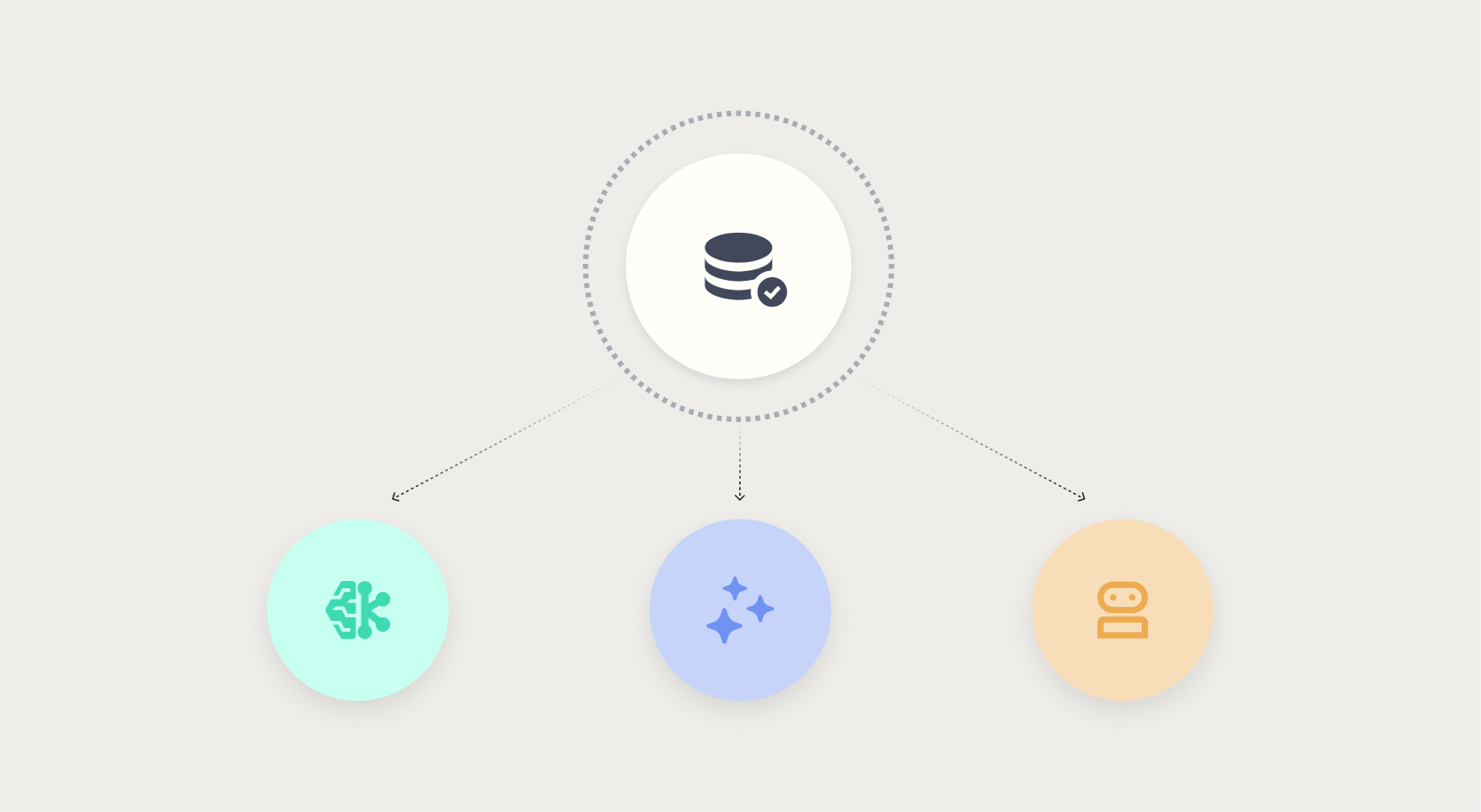

3. When AI Moves from Prediction to Action

Generative AI and agentic AI break that containment. Instead of just predicting, these systems act—they generate text, make decisions, execute tasks. A chatbot pulling from a stale knowledge base delivers a confident, wrong answer with no signal that anything is wrong. An autonomous procurement agent commits budget on incomplete supplier data before anyone reviews it. The AI operated exactly as designed, on data that was never fit for purpose. The further AI moves from prediction to action, the less tolerance there is for data quality failures.

4. The Data Trust Gap

Trust in AI output is only as strong as the data behind it. When data quality is poor, trust erodes silently. In generative AI, a model might produce plausible-sounding but factually incorrect text because it relied on noisy or biased training data. In agentic AI, an autonomous system might act on outdated or incomplete information, leading to costly mistakes. The gap between expected and actual performance widens, but because the AI still appears to function normally, humans don't intervene until it's too late.

5. How Stale Knowledge Bases Sabotage Chatbots

Consider a customer service chatbot that uses a knowledge base updated quarterly. If a product change occurs mid-quarter, the chatbot continues to answer based on old information. It has no way of knowing the content is outdated; it simply generates the most likely answer from what it was given. Users get confident wrong answers, frustration builds, and the brand suffers. Unlike a human agent who might say “I'm not sure,” the bot never hesitates. Data freshness is critical for generative AI, but it's often the first thing neglected.
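One way to give the bot a chance to hesitate is to attach a freshness check to each knowledge-base entry. The sketch below assumes every document carries a `last_updated` timestamp; the field names, the 30-day limit, and the wording of the caveat are illustrative assumptions, not part of any specific chatbot framework.

```python
from datetime import datetime, timedelta, timezone

def is_stale(last_updated, now, max_age=timedelta(days=30)):
    """True when the document is older than the allowed age."""
    return (now - last_updated) > max_age

def answer_with_freshness(doc, now, max_age=timedelta(days=30)):
    """Return the document text, adding a caveat when the source is stale."""
    if is_stale(doc["last_updated"], now, max_age):
        verified = doc["last_updated"].date().isoformat()
        return f"{doc['text']} (Last verified on {verified}; this may be out of date.)"
    return doc["text"]

# Hypothetical knowledge-base entry, last refreshed at the start of the quarter.
now = datetime(2024, 6, 1, tzinfo=timezone.utc)
doc = {
    "text": "The Pro plan includes 5 seats.",
    "last_updated": datetime(2024, 3, 1, tzinfo=timezone.utc),
}
print(answer_with_freshness(doc, now))
```

The same check could instead route stale entries to a refresh queue or suppress them from retrieval; which response is appropriate depends on how costly a wrong answer is.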

6. Autonomous Agents Acting on Incomplete Data

Agentic AI systems—like automated procurement agents or supply chain bots—make decisions without human oversight. If they're fed incomplete supplier records, they may approve orders from unvetted vendors or miss discounts. The damage is immediate and financial. Because the agent acts autonomously, there's no one to question the data until the budget is already committed. This is a new failure mode: the AI didn't malfunction, the data did.
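A completeness gate placed in front of the agent's action is one possible safeguard. In this sketch the required fields, the `vetting_status` values, and the `decide` function are hypothetical; a real procurement system would define its own schema and escalation path.

```python
# Hypothetical fields an order decision depends on.
REQUIRED_FIELDS = ("supplier_id", "vetting_status", "unit_price", "contract_end")

def missing_fields(record, required=REQUIRED_FIELDS):
    """List required fields that are absent, None, or empty strings."""
    return [f for f in required if record.get(f) in (None, "")]

def decide(record):
    """Return ('proceed', []) only when the record is complete and the vendor is approved."""
    gaps = missing_fields(record)
    if gaps:
        return ("escalate", gaps)              # hand off to a human reviewer
    if record["vetting_status"] != "approved":
        return ("escalate", ["vetting_status"])
    return ("proceed", [])

incomplete = {"supplier_id": "S-104", "unit_price": 12.5, "contract_end": "2025-01-31"}
complete = dict(incomplete, vetting_status="approved")

print(decide(incomplete))
print(decide(complete))
```

The point of the gate is ordering: the data is questioned before the budget is committed, not after.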

7. The $2.3M Margin Shortfall Example

In one real-world case, a pricing model for a retail company caused a $2.3 million margin shortfall. The model was trained on historical data that had a subtle data quality issue: missing shipping costs for certain regions. The model learned to assume lower costs, leading to underpriced products. By the time the error was caught, thousands of orders had been processed. This illustrates how even small data quality problems can cascade into massive financial losses in machine learning systems.
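A per-region missing-value profile would surface this kind of gap before training. The data below is invented for illustration, and the article does not say how the missing costs were handled; the sketch assumes they were treated as zero, which is one common way a model ends up learning costs that are too low.

```python
from collections import defaultdict

# Invented order rows; None marks a missing shipping cost.
orders = [
    {"region": "north", "shipping_cost": 4.0},
    {"region": "north", "shipping_cost": 6.0},
    {"region": "west", "shipping_cost": None},
    {"region": "west", "shipping_cost": None},
    {"region": "west", "shipping_cost": 12.0},
]

def missing_rate_by_region(rows, field="shipping_cost"):
    """Share of rows per region where the field is missing."""
    total = defaultdict(int)
    missing = defaultdict(int)
    for row in rows:
        total[row["region"]] += 1
        if row[field] is None:
            missing[row["region"]] += 1
    return {region: missing[region] / total[region] for region in total}

def mean_cost(rows, region, missing_as_zero):
    """Average shipping cost for a region, with missing values zero-filled or dropped."""
    values = [r["shipping_cost"] for r in rows if r["region"] == region]
    if missing_as_zero:
        values = [0.0 if v is None else v for v in values]
    else:
        values = [v for v in values if v is not None]
    return sum(values) / len(values)

print(missing_rate_by_region(orders))
print(mean_cost(orders, "west", missing_as_zero=True))   # 4.0, understated
print(mean_cost(orders, "west", missing_as_zero=False))  # 12.0
```

In this toy data the overall missing rate is 40 percent, but it is concentrated entirely in one region, which is why a profile broken down by segment is more useful than a single global number.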

8. No Signal of Failure

Perhaps the scariest aspect of AI failures caused by data quality is the lack of a warning signal. A model might maintain high accuracy metrics while being completely wrong on edge cases. For generative AI, there's no dashboard showing “this answer is 80% likely to be false.” For agentic AI, no red flag appears when an agent acts on stale data. The AI appears to be working perfectly—until the damage surfaces. This makes detection and prevention extremely difficult.
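The gap between a healthy headline metric and a failing edge case is easy to show with per-slice accuracy. The records and slice labels below are made up for illustration.

```python
from collections import defaultdict

def accuracy(records):
    """Overall share of records where the prediction matches the actual label."""
    return sum(r["predicted"] == r["actual"] for r in records) / len(records)

def accuracy_by_slice(records):
    """Accuracy computed separately for each value of the 'slice' field."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for r in records:
        total[r["slice"]] += 1
        correct[r["slice"]] += int(r["predicted"] == r["actual"])
    return {s: correct[s] / total[s] for s in total}

# 95 common cases predicted correctly, 5 edge cases all predicted wrongly.
records = (
    [{"slice": "common", "predicted": 1, "actual": 1}] * 95
    + [{"slice": "edge", "predicted": 1, "actual": 0}] * 5
)

print(accuracy(records))           # 0.95
print(accuracy_by_slice(records))  # {'common': 1.0, 'edge': 0.0}
```

Slicing only helps where labels exist to score against. For generative and agentic systems that feedback often arrives late or not at all, which is the core of the problem this section describes.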

9. The Illusion of Clean Data

Many teams assume their data is clean because it passes basic validation checks. But data quality isn't binary—it's contextual. A dataset might be clean for one use case but disastrous for another. For example, customer addresses might be formatted correctly for mailing but unusable for geographic analysis because they lack precision. When the same data is reused across ML, generative AI, and agentic AI projects, these hidden flaws multiply. Teams must continuously assess fitness for purpose.
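Fitness for purpose can be made explicit by writing one check per use case rather than one global "is valid" flag. In the sketch below, the field names and the rule that geographic analysis needs coordinates are illustrative assumptions.

```python
def fit_for_mailing(addr):
    """A mailing label needs street, city, and postal code."""
    return all(addr.get(k) for k in ("street", "city", "postal_code"))

def fit_for_geo_analysis(addr):
    """Geographic analysis (in this sketch) needs latitude and longitude."""
    return addr.get("lat") is not None and addr.get("lon") is not None

# Hypothetical record: well-formed for mailing, never geocoded.
address = {
    "street": "12 Main St",
    "city": "Springfield",
    "postal_code": "12345",
    "lat": None,
    "lon": None,
}

print(fit_for_mailing(address))       # True
print(fit_for_geo_analysis(address))  # False
```

The same record passes one check and fails the other, so a dataset's "clean" status has to be recorded per consumer, not once per table.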

10. Building Data Fitness into AI Projects

To survive the transition from prediction to action, organizations need systematic data quality processes. This means profiling data at every stage, monitoring for drift, implementing automated checks for completeness and freshness, and creating feedback loops where models flag their own uncertainty. It also requires a cultural shift: treating data as a product, not a byproduct. When data is fit for purpose, AI can be trusted to act. When it is not, failures arrive without warning.
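As one example of drift monitoring, the sketch below computes the Population Stability Index (PSI) between a reference sample and a recent sample of a numeric feature. The article does not prescribe a drift metric; PSI is swapped in here as a common choice, and the bin edges, the `eps` floor, and the sample values are assumptions. Both samples are assumed to be non-empty.

```python
import math

def bin_shares(values, edges, eps=1e-6):
    """Share of values in each bin defined by sorted edges, floored at eps to avoid log(0)."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        idx = sum(v >= e for e in edges)  # number of edges at or below v = bin index
        counts[idx] += 1
    return [max(c / len(values), eps) for c in counts]

def psi(expected, actual, edges, eps=1e-6):
    """Population Stability Index: sum over bins of (a - e) * ln(a / e)."""
    e_shares = bin_shares(expected, edges, eps)
    a_shares = bin_shares(actual, edges, eps)
    return sum((a - e) * math.log(a / e) for e, a in zip(e_shares, a_shares))

reference = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]      # e.g. training-time feature values
recent = [7, 8, 9, 10, 11, 12, 13, 14, 15, 16]   # e.g. last week's production values
edges = [3.5, 6.5]                               # three bins: low, mid, high

score = psi(reference, recent, edges)
# A commonly cited rule of thumb treats PSI above 0.25 as a significant shift.
print("drift detected" if score > 0.25 else "stable")
```

A drift score like this covers only one of the practices listed above; completeness and freshness checks need their own gates, and all of them are most useful when they run automatically on every data refresh.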

Conclusion: Data quality is not a one-time checklist; it's an ongoing discipline that must evolve with AI's increasing autonomy. Traditional ML gave us warning signs, but generative and agentic AI erase them. The only defense is to build data fitness into the core of every AI project, from inception to production. Without it, the cost of silent failure will only grow.